during: 2017

Machine Justification

I remember in my teens being told of the wonderful things Artificial Intelligence (AI) would do in the next few years. Now, several decades later, some of these seem to be happening. The most recent triumph was computers learning to play Go by playing against each other, rapidly becoming more proficient than any human, with strategies human experts could barely comprehend. It's natural to wonder what will happen over the next few years: will computers soon have greater intelligence than humanity? (Given some recent election results, that may not be too hard a bar to cross.)

But as I hear of these, I recall Pablo Picasso's comment about computers many decades ago: “Computers are useless. They can only give you answers”. Techniques such as Machine Learning produce truly impressive results, and will be useful to us as users and developers of software. But answers, while useful, aren't always the whole picture. I learned this in my early days of school: just providing the answer to a math problem would only get me a couple of marks; to get the full score I had to show how I got it. The reasoning that got to the answer was more valuable than the result itself. That's one of the limitations of the self-taught Go AIs. While they can win, they cannot explain their strategies.

Race for the Galaxy and San Juan

San Juan and Race for the Galaxy are excellent, fast, and thoughtful card games. Race is deeper and its icons make it less approachable for some.

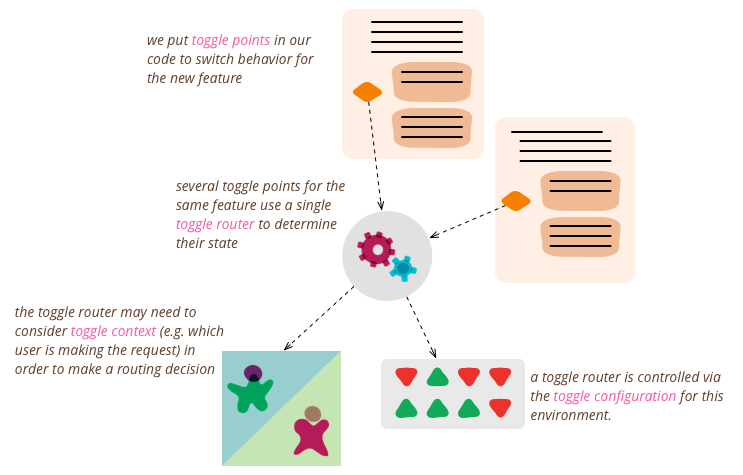

Feature Toggles (aka Feature Flags)

Feature Toggles (often also referred to as Feature Flags) are a powerful technique, allowing teams to modify system behavior without changing code. They fall into various usage categories, and it's important to take that categorization into account when implementing and managing toggles. Toggles introduce complexity. We can keep that complexity in check by using smart toggle implementation practices and appropriate tools to manage our toggle configuration, but we should also aim to constrain the number of toggles in our system.
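As a rough illustration of modifying behavior without changing code, here is a minimal sketch in Python. The `ToggleRouter` class, the `order-cancellation-link` toggle name, and the email function are all hypothetical names chosen for this example, not taken from the article.

```python
class ToggleRouter:
    """Keeps toggle state in one place so callers never read raw config."""

    def __init__(self, flags=None):
        # Hypothetical toggle names mapped to on/off state.
        self._flags = dict(flags or {})

    def set_feature(self, name, enabled):
        self._flags[name] = enabled

    def is_enabled(self, name):
        # Unknown toggles default to off, so a missing entry is safe.
        return self._flags.get(name, False)


def order_confirmation_email(router):
    """Builds an email body whose content depends on a toggle."""
    body = "Thanks for your order."
    if router.is_enabled("order-cancellation-link"):
        body += " To cancel, follow the link in your account."
    return body
```

Routing every toggle check through one object is one way to keep the complexity visible: the set of toggles in play can be listed, and removed, in a single place.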

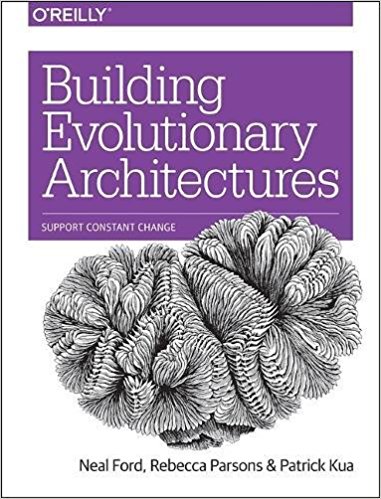

Foreword to Building Evolutionary Architectures

Recently my colleagues Neal Ford, Rebecca Parsons, and Pat Kua wrote a book entitled “Building Evolutionary Architectures”. I was honored that they asked me to write the foreword.

Roy sells Thoughtworks

Thoughtworks is acquired by Apax Funds. The current management team will continue to run the company as before.

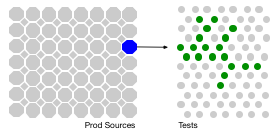

The Rise of Test Impact Analysis

Test Impact Analysis (TIA) is a modern way of speeding up the test automation phase of a build. It works by analyzing the call-graph of the source code to work out which tests should be run after a change to production code. Microsoft has done some extensive work on this approach, but it's also possible for development teams to implement something useful quite cheaply.
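To make the idea concrete, here is a small sketch that assumes the call graph is already available as a dictionary mapping each function name to the functions it calls. The function names and the graph format are assumptions for illustration; real tooling would derive this data from the source or from coverage runs.

```python
def reachable(call_graph, start):
    """Return every node reachable from `start`, including `start` itself."""
    seen = set()
    stack = [start]
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        stack.extend(call_graph.get(node, ()))
    return seen


def impacted_tests(call_graph, tests, changed):
    """Select the tests whose call graph touches any changed function."""
    changed = set(changed)
    return [test for test in tests if reachable(call_graph, test) & changed]


# Hypothetical call graph: tests are entry points into production code.
CALL_GRAPH = {
    "test_checkout": {"checkout"},
    "checkout": {"price", "tax"},
    "test_search": {"search"},
}
```

With this graph, a change to `tax` selects only `test_checkout`, so `test_search` can be skipped for that build.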

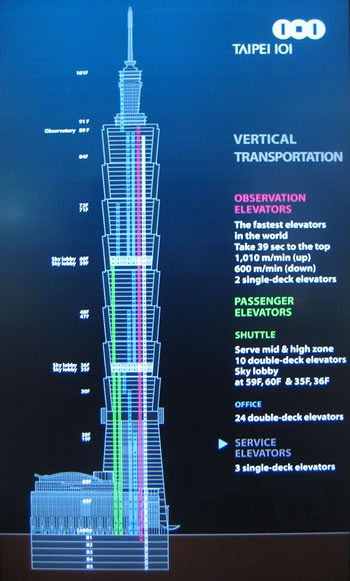

The Architect Elevator — Visiting the upper floors

Many large organizations see their IT engine separated by many floors from the executive penthouse, which also separates business and digital strategy from the vital work of carrying it out. The primary role of an architect is to ride the elevators between the penthouse and engine room, stopping wherever is needed to support these digital efforts: automating software manufacturing, minimizing up-front decision making, and influencing the organization alongside technology evolution.

Podcast on Agility and Architecture

Ryan Lockard (Agile Uprising) invited me to join Rebecca Wirfs-Brock for a podcast conversation on architecture on agile projects. Rebecca developed Responsibility-Driven Design, which was a big influence for me when I started my career. We talked about how we define architecture, the impact of tests on architecture, the role of domain models, what kind of documentation to prepare, and how much architecture needs to be done up-front.

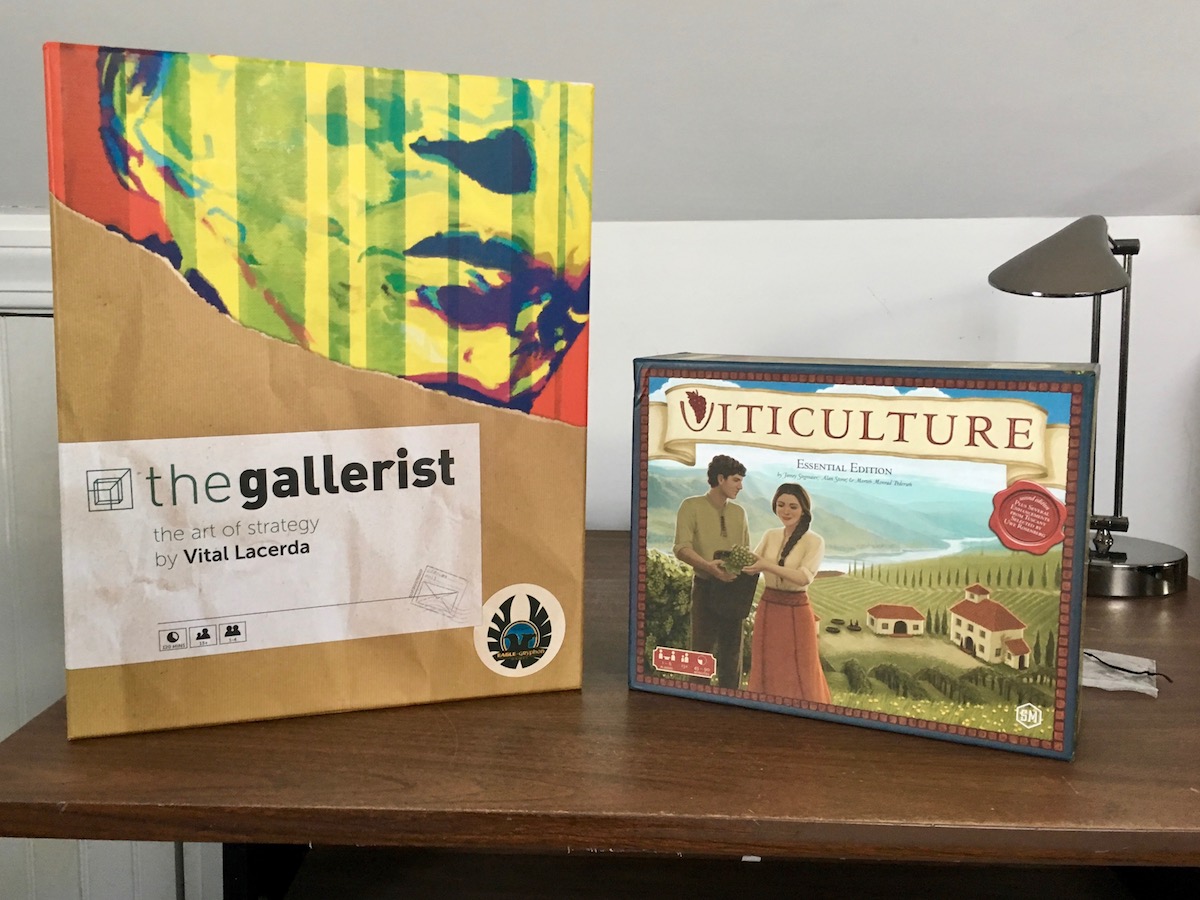

Viticulture and The Gallerist

Viticulture and The Gallerist are both excellent Eurogames with a strong theme of a production-centered business.

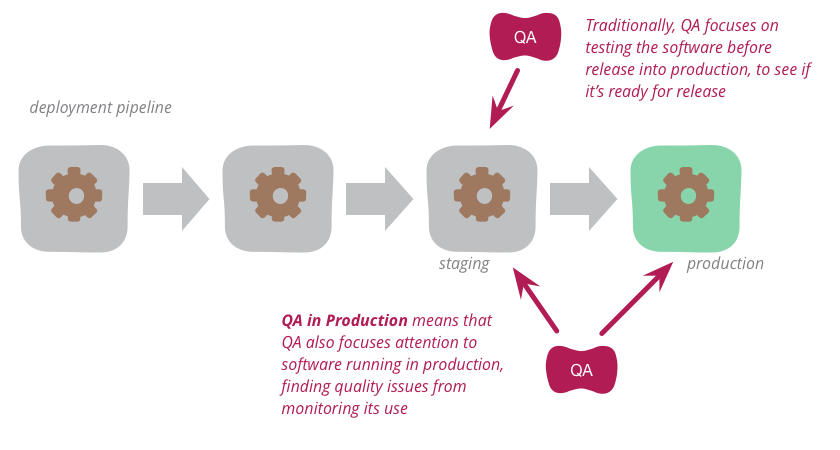

QA in Production

Traditionally, QA focuses on testing the software before release into production to see if it's ready for such release. But increasingly, modern QA organizations are also focusing attention onto the software running in production. By analyzing logs and other monitoring tools, they find quality problems to highlight to the development organization. This approach works particularly well with organizations that use continuous delivery to put new versions of the software into production rapidly and reliably.

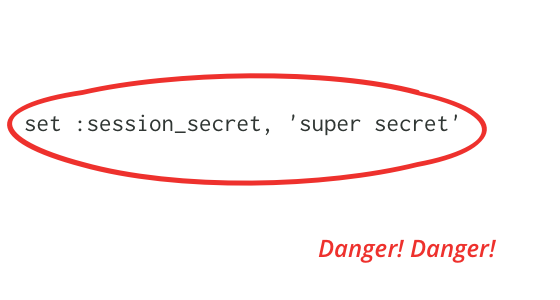

One Line of Code that Compromises Your Server

A session secret is a key used for encrypting cookies. Application developers often set it to a weak key during development, and don't fix it during production. This article explains how such a weak key can be cracked, and how that cracked key can be used to gain control of the server that hosts the application. We can prevent this by using strong keys and careful key management. Library authors should encourage this with tools and documentation.
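One way to guard against a weak key reaching production is sketched below, using Python's standard `secrets` module. The `SESSION_SECRET` variable name and the 32-byte minimum are assumptions for this example, not recommendations from the article.

```python
import os
import secrets

# Assumed minimum key size for this sketch.
MIN_SECRET_BYTES = 32


def generate_session_secret():
    """Create a random key from the OS's cryptographic random source."""
    return secrets.token_hex(MIN_SECRET_BYTES)


def load_session_secret(env=os.environ):
    """Read the key from the environment and refuse to start if it looks weak."""
    secret = env.get("SESSION_SECRET", "")  # hypothetical variable name
    # token_hex encodes each byte as two hex characters.
    if len(secret) < MIN_SECRET_BYTES * 2:
        raise RuntimeError("SESSION_SECRET is missing or too short")
    return secret
```

Failing at startup is a deliberate choice here: a development placeholder such as `secret` stops the application instead of silently protecting production cookies. A length check alone does not prove a key is random, so it complements careful key management rather than replacing it.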

Self Encapsulation

Data encapsulation is a central tenet in object-oriented style. This says that the fields of an object should not be exposed publicly; instead, all access from outside the object should be via accessor methods (getters and setters). There are languages that allow publicly accessible fields, but we usually caution programmers not to do this. Self-encapsulation goes a step further, indicating that all internal access to a data field should go through accessor methods as well. Only the accessor methods should touch the data value itself. If the data field isn't exposed to the outside, this will mean adding additional private accessors.
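A short sketch of what this looks like in practice, written in Python, where privacy is a naming convention rather than something the language enforces. The `Charge` and `DiscountedCharge` classes are hypothetical examples.

```python
class Charge:
    def __init__(self, units, rate):
        self._units = units
        self._rate = rate

    # Only these accessors touch the underlying fields.
    @property
    def units(self):
        return self._units

    @property
    def rate(self):
        return self._rate

    def amount(self):
        # Internal access also goes through the accessors.
        return self.units * self.rate


class DiscountedCharge(Charge):
    @property
    def rate(self):
        # Overriding the accessor changes every internal use of the rate.
        return super().rate * 0.5
```

Because `amount` reads `self.rate` rather than `self._rate`, the subclass alters the calculation by overriding a single accessor. Whether that flexibility is worth the extra indirection is a judgment call.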

Function As Object

In programming, the fundamental notion of an object is the bundling of data and behavior. This provides a common data context when writing a set of related functions. It also provides an interface for manipulating the data that allows the object to control access to that data, making it easy to support derived data and prevent invalid modifications of data. Many languages provide explicit syntax to define classes, which act as definitions for objects. But if you have a language with first-class functions and closures, you can use these constructs to create objects using the Function As Object pattern (originally described by Eugene Wallingford).
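Here is a minimal sketch of the pattern in Python, which has first-class functions and closures. The rectangle example and its function names are my own illustration, not taken from the article.

```python
from types import SimpleNamespace


def create_rectangle(width, height):
    """Build a rectangle 'object' out of closures; no class is defined."""

    def check(value):
        if value <= 0:
            raise ValueError("dimensions must be positive")
        return value

    # State lives in the enclosing scope, invisible to callers.
    state = {"width": check(width), "height": check(height)}

    def set_width(value):
        # Invalid modifications are rejected before state changes.
        state["width"] = check(value)

    def area():
        # Derived data is computed from the hidden state on demand.
        return state["width"] * state["height"]

    # The returned namespace is the object's public interface.
    return SimpleNamespace(set_width=set_width, area=area)
```

Callers can use `rect.area()` and `rect.set_width(4)` much as they would with a class instance, but the only way to reach the state is through the functions that close over it.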

Recollections of Writing the Agile Manifesto

The Agile Uprising podcast has been doing a series of interviews with the authors of the Agile Manifesto. This is my turn in the interview seat. I don't remember much about the Snowbird workshop itself, but I was able to describe a bit about the context leading up to the manifesto.

What do you mean by “Event-Driven”?

Towards the end of last year I attended a workshop with my colleagues in Thoughtworks to discuss the nature of “event-driven” applications. Over the last few years we've been building lots of systems that make a lot of use of events, and they've been often praised, and often damned. Our North American office organized a summit, and Thoughtworks senior developers from all over the world showed up to share ideas.

The biggest outcome of the summit was recognizing that when people talk about “events”, they actually mean some quite different things. So we spent a lot of time trying to tease out what some useful patterns might be. This note is a brief summary of the main ones we identified.

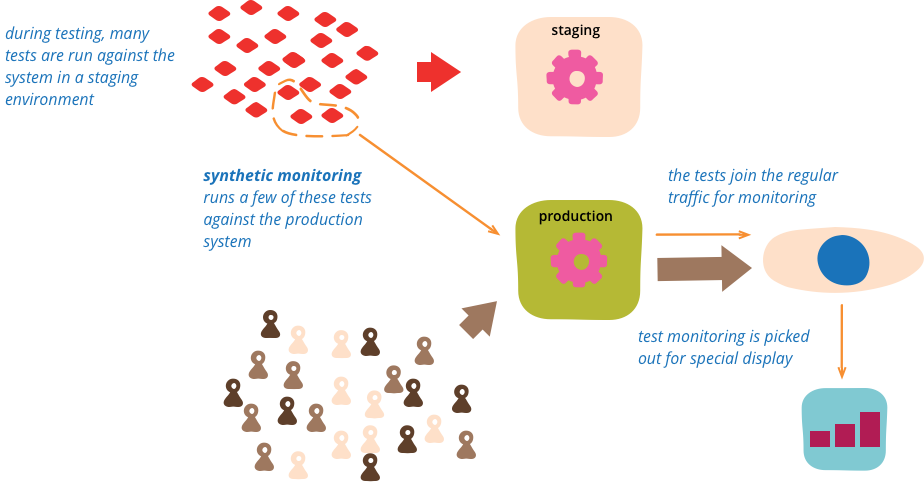

Synthetic Monitoring

Synthetic monitoring (also called semantic monitoring) runs a subset of an application's automated tests against the live production system on a regular basis. The results are pushed into the monitoring service, which triggers alerts in case of failures. This technique combines automated testing with monitoring in order to detect failing business requirements in production.
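The core loop can be sketched as follows. This is a simplified illustration: the `checks` mapping and the `report` callback are hypothetical stand-ins for real production tests and a real monitoring service client, and scheduling is left to whatever job runner is already in use.

```python
def run_synthetic_checks(checks, report):
    """Run each check and push a pass/fail result to the monitoring hook.

    `checks` maps a check name to a zero-argument callable that raises on
    failure. `report` is called with (name, passed) for every check.
    """
    failures = []
    for name, check in checks.items():
        try:
            check()
        except Exception:
            # Any exception, including a failed assertion, counts as a failure.
            report(name, False)
            failures.append(name)
        else:
            report(name, True)
    return failures
```

Alerting stays with the monitoring service: this loop only reports results, so the same alert rules apply to synthetic checks and to ordinary metrics.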

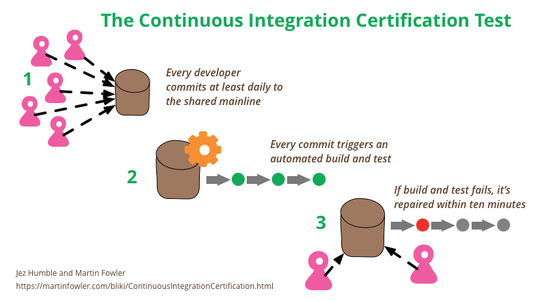

Continuous Integration Certification

Continuous Integration is a popular technique in software development. At conferences many developers talk about how they use it, and Continuous Integration tools are common in most development organizations. But we all know that any decent technique needs a certification program — and fortunately one does exist. Developed by one of the foremost experts in continuous delivery and devops, it’s known for being remarkably rapid to administer, yet very insightful for its results. Although it’s quite mature, it isn’t as well known as it should be, so as a fan of the technique I think it’s important for me to share this certification program with my readers. Are you ready to be certified for Continuous Integration? And how will you deal with the shocking truth that taking the test will reveal?

The Basics of Web Application Security

Modern web development has many challenges, and of those security is both very important and often under-emphasized. While such techniques as threat analysis are increasingly recognized as essential to any serious development, there are also some basic practices which every developer can and should be doing as a matter of course.